The Exploration and In-Space Services (ExIS) Division at the NASA Goddard Space Flight Center (GSFC) is developing technology for on-orbit robotic servicing of satellites, using human operators located on Earth to control the remote robot. Much of our effort has focused on one crucial step in on-orbit refueling, which is to gain access to the satellite’s fuel ports by removing a portion of the multilayer insulation (MLI) that protects the outside of the satellite body.

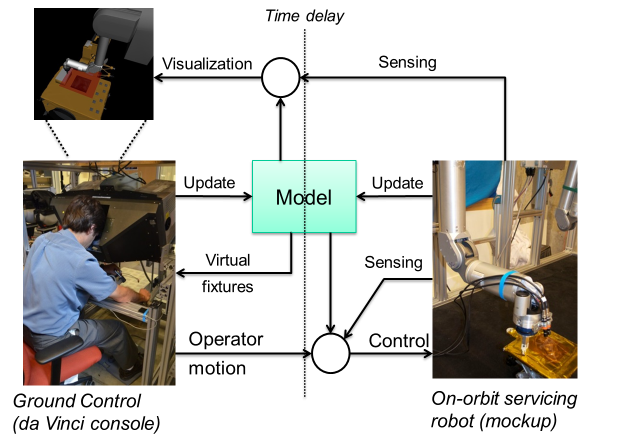

We adopted a model-based approach (see the figure above), in which the operator first creates a model of the environment. This model enables both improved visualization (e.g., augmented virtuality) and improved control (e.g., virtual fixtures). We are working to incorporate dynamic simulation to update the model as the remote environment changes.

We investigated two approaches to gaining access to the satellite fill/drain valves, based on two different satellite configurations for two NASA missions:

- Using a flat blade, similar to a seam ripper, to cut the tape that secures an MLI patch against the surface of the satellite. This is based on the experimental setup for NASA’s Robotic Refueling Mission (RRM).

- Using a rotary cutting blade to cut through three sides of an MLI “hat”, such as the one on Landsat 7. This is based on NASA’s design for the OSAM-1 mission (previously, Restore-L).

Research in Model-Based Control (RRM setup)

Our research in model-based control was performed with the RRM setup, where a sharp blade (similar to a seam ripper) is used to cut the Kapton tape that holds the MLI patch over the access panel. The primary cutting strategy is to press the blade normal to the MLI surface (which is supported by a rigid part of the satellite) and slide it tangentially along the surface to cut the tape. This enables the use of virtual fixtures on the master console and hybrid position/force control on the slave robot. We demonstrated this approach on a ground-based mockup, where we used the master console of an open-source research version of the da Vinci Surgical Robot to teleoperate the remote robot, which was either a Whole Arm Manipulator (WAM) robot at JHU or a Motoman robot at GSFC or at the West Virginia Robotic Technology Center (WVRTC). We performed several multi-user studies with the JHU testbed, with Institutional Review Board (IRB) approval, as described below.

We first created an augmented reality user interface, presented at IROS 2012, that enables the operator to define a virtual fixture plane on the master console to represent the satellite surface. In later work, presented at ICRA 2015, we developed a method that helps the operator align the virtual plane more accurately: the virtual plane is dynamically textured so that it appears visually distorted until it is accurately aligned with the real one. Once the virtual plane is defined, it provides haptic feedback to the operator and prevents the operator from moving beyond the plane or from changing the orientation of the cutter with respect to the plane normal (rotation within the plane, i.e., around the plane normal, may be permitted). On the remote robot, the user-specified plane determines the task frame for hybrid position/force control; specifically, the plane normal defines the direction for force control. Thus, while the operator interacts with the simulated environment, the remote robot uses sensor-based control to attempt to reproduce this simulation, as presented at ICRA 2013. We validated this approach in our first multi-user study, which was presented at ICRA 2015. We also developed a method to update the task frame (i.e., the alignment of the plane model) used for hybrid position/force control based on the actual trajectory followed by the robot, which is necessary to ensure that the cutter remains correctly oriented with respect to the plane. This required a method to estimate the surface compliance in order to compensate for variations in the normal force. The method and results were presented at ICRA 2016.
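The two roles of the plane described above can be illustrated with a minimal Python sketch. It is not the implementation from the cited papers: the function names, the proportional force law, the gain `k_f`, and the sign conventions (plane normal pointing away from the satellite, forces given as contact magnitudes) are all assumptions made for illustration.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(x / length for x in v)

def clamp_to_plane(p, point_on_plane, normal):
    """Master side (hypothetical): keep the commanded position p on the
    free side of the plane. `normal` is assumed to point away from the
    satellite surface."""
    n = normalize(normal)
    offset = tuple(pi - qi for pi, qi in zip(p, point_on_plane))
    depth = dot(offset, n)  # signed distance; negative means beyond the plane
    if depth >= 0.0:
        return tuple(p)
    # Project the point back onto the plane along the normal.
    return tuple(pi - depth * ni for pi, ni in zip(p, n))

def split_hybrid_command(v_cmd, normal, f_desired, f_measured, k_f):
    """Remote side (hypothetical): keep the tangential part of the
    commanded velocity (position-controlled directions) and replace the
    normal part with a proportional force-control term. Forces are
    contact magnitudes; k_f is an assumed gain."""
    n = normalize(normal)
    v_normal = dot(v_cmd, n)
    v_tangent = tuple(vi - v_normal * ni for vi, ni in zip(v_cmd, n))
    # Too little contact force gives a negative value, i.e. motion along -n
    # (into the surface); too much force backs the tool off along +n.
    v_force = k_f * (f_measured - f_desired)
    return tuple(vt + v_force * ni for vt, ni in zip(v_tangent, n))
```

For example, with the plane `z = 0` and an upward normal, a commanded position of `(0, 0, -0.5)` is moved back onto the plane, and the operator's downward velocity component is discarded in favor of the force-control term.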

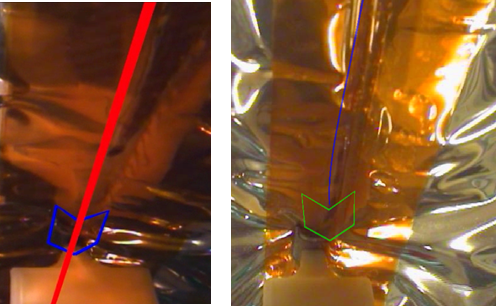

In addition to the virtual fixture plane, we tested several virtual fixtures to better constrain motion on the plane. The left figure shows a line virtual fixture (VF), where the operator adjusts the line by pressing the clutch pedal (to temporarily disengage teleoperation) and then uses the master manipulator to change the orientation of the line with respect to the plane (i.e., a “masters as mice” interaction). We also created a non-holonomic constraint (NHC), which is similar to the line VF except that the operator adjusts the line direction by “steering” (similar to driving a car), and a non-holonomic virtual fixture (NHVF), where the operator is able to deviate from the line, but the system applies a virtual fixture to help steer back toward the line. The right figure shows a visualization of the NHVF, where the curved blue line depicts the VF guidance path back toward the line. We performed multi-user trials of these virtual fixtures and presented results at IROS 2015.
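As a rough illustration of the “steering” idea, the sketch below models the cutter on the plane as a unicycle-like system: it can move only along its current heading, and the operator changes the heading through a steering rate. The kinematic model, the function names, and the guidance law with gains `k_offset` and `k_heading` are assumptions for illustration, not the formulation used in the IROS 2015 paper.

```python
import math

def nhc_step(x, y, theta, v_cmd, omega, dt):
    """Advance the planar cutter pose under a unicycle-like constraint.
    v_cmd is the operator's commanded planar velocity (vx, vy); only its
    component along the current heading is kept, so sideways motion is
    discarded. omega is the steering rate in rad/s."""
    heading = (math.cos(theta), math.sin(theta))
    speed = v_cmd[0] * heading[0] + v_cmd[1] * heading[1]
    return (x + speed * heading[0] * dt,
            y + speed * heading[1] * dt,
            theta + omega * dt)

def steer_toward_line(lateral_offset, heading_error, k_offset, k_heading):
    """Hypothetical NHVF-style guidance: a steering rate that turns the
    cutter back toward a reference line. lateral_offset is the signed
    distance to the line (positive to the left of it) and heading_error
    is the heading relative to the line direction."""
    return -k_offset * lateral_offset - k_heading * heading_error
```

With the heading along the x-axis, a commanded velocity of `(1.0, 5.0)` advances the cutter only in x, since the lateral component is removed by the constraint.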

As shown in the top figure, we developed a Task Monitor so that the remote robot can detect cutting failures itself, rather than waiting for the operator to recognize the problem in the delayed video feedback and take corrective action via time-delayed control. We experimentally determined that the expected force in the direction of cutting could be sufficiently described by kinetic friction plus a constant force due to the cutting process. This simple model should be feasible to evaluate even with the limited computational resources available in space. The concept is that the on-orbit robot system would use the model to estimate the expected force in the direction of cutting, and stop motion if the measured force is significantly higher or lower than the expected force. Initial results were published at the IEEE Haptics Symposium in 2014, followed by an improved method, presented at IROS 2015, that included on-line estimation of the model parameters (i.e., friction and cutting force).
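The force model described above (kinetic friction plus a constant cutting force) can be written as a short Python sketch. The symbol names `mu`, `f_cut`, and `tolerance` are assumptions, and the batch least-squares fit is a simplified stand-in for the on-line estimation mentioned in the text, not the published method.

```python
def expected_cut_force(mu, f_cut, f_normal):
    """Expected force along the cutting direction: kinetic friction
    (mu * normal force) plus a constant cutting force."""
    return mu * f_normal + f_cut

def is_anomaly(f_measured, mu, f_cut, f_normal, tolerance):
    """Flag a cutting failure when the measured force is significantly
    higher or lower than expected. `tolerance` is an assumed threshold
    in the same units as the forces."""
    return abs(f_measured - expected_cut_force(mu, f_cut, f_normal)) > tolerance

def fit_parameters(normal_forces, tangential_forces):
    """Estimate (mu, f_cut) by ordinary least squares on paired force
    samples. This is a batch fit; an on-line estimator would update the
    same two parameters incrementally. Requires at least two distinct
    normal-force values."""
    n = len(normal_forces)
    mean_n = sum(normal_forces) / n
    mean_t = sum(tangential_forces) / n
    sxx = sum((fn - mean_n) ** 2 for fn in normal_forces)
    sxy = sum((fn - mean_n) * (ft - mean_t)
              for fn, ft in zip(normal_forces, tangential_forces))
    mu = sxy / sxx
    f_cut = mean_t - mu * mean_n
    return mu, f_cut
```

A monitor loop on the remote robot would call `is_anomaly` on each force sample and stop motion when it returns `True`; evaluating the model costs one multiplication, one addition, and one comparison per sample.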

Publications (RRM setup)

Task Frame Estimation during Model-Based Teleoperation for Satellite Servicing Proceedings Article

In: IEEE Intl. Conf. on Robotics and Automation (ICRA), pp. 2834-2839, Stockholm, Sweden, 2016.

Parameter Estimation and Anomaly Detection while Cutting Insulation during Telerobotic Satellite Servicing Proceedings Article

In: IEEE/RSJ Intl. Conf. on Intelligent Robots and Systems (IROS), pp. 4562-4567, Hamburg, Germany, 2015.

Preliminary Study of Virtual Nonholonomic Constraints for Time-Delayed Teleoperation Proceedings Article

In: IEEE/RSJ Intl. Conf. on Intelligent Robots and Systems (IROS), pp. 4244-4250, Hamburg, Germany, 2015.

Registration of planar virtual fixtures by using augmented reality with dynamic textures Proceedings Article

In: IEEE Intl. Conf. on Robotics and Automation (ICRA), pp. 4418-4423, 2015.

Experimental Evaluation of Force Control for Virtual-Fixture-Assisted Teleoperation for On-Orbit Manipulation of Satellite Thermal Blanket Insulation Proceedings Article

In: IEEE Intl. Conf. on Robotics and Automation (ICRA), pp. 4424-4431, Seattle, WA, 2015.

Strategies and models for cutting satellite insulation in telerobotic servicing missions Proceedings Article

In: IEEE Haptics Symposium, pp. 467-472, Houston, TX, 2014.

Model-Based Telerobotic Control with Virtual Fixtures For Satellite Servicing Tasks Proceedings Article

In: IEEE Intl. Conf. on Robotics and Automation (ICRA), pp. 1479-1484, Karlsruhe, Germany, 2013.

Augmented Reality Environment with Virtual Fixtures for Robotic Telemanipulation in Space Proceedings Article

In: IEEE/RSJ Intl. Conf. on Intelligent Robots and Systems (IROS), pp. 5059-5064, Vilamoura, Portugal, 2012.

Research in Model-Based Visualization (OSAM-1 setup)

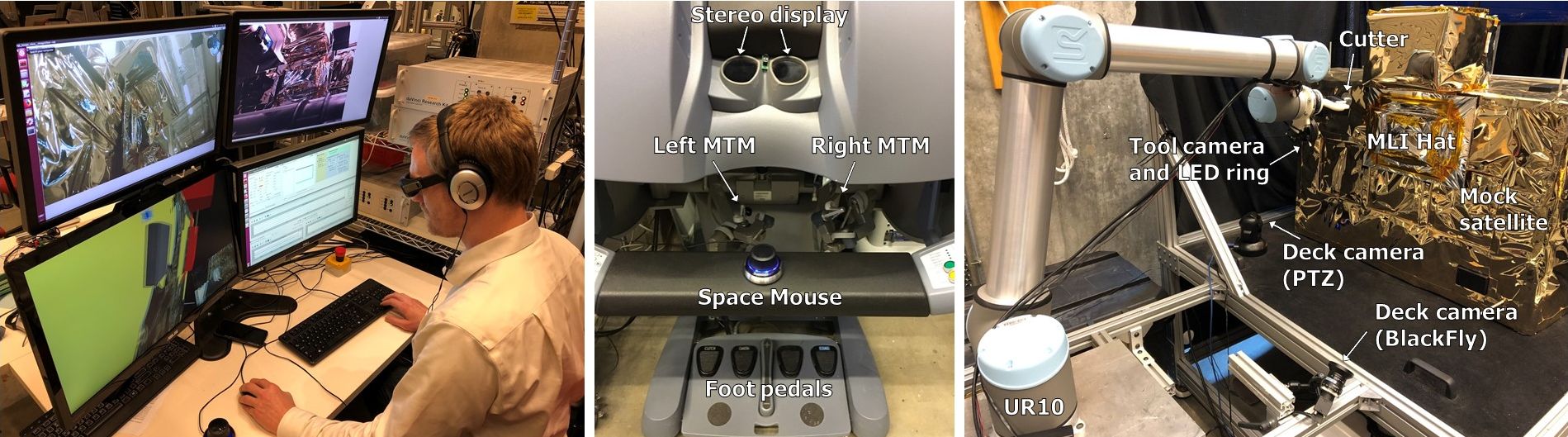

The OSAM-1 mission requires the servicing robot to cut through an MLI “hat” to gain access to the satellite fill/drain valves. The sides of this hat are not supported by an underlying stiff structure and thus it is not possible to use hybrid position/force control on the slave robot as in the RRM setup. We therefore focused our efforts on creating models to support improved visualization of the remote environment and assistance in performing the cutting task on the master console.

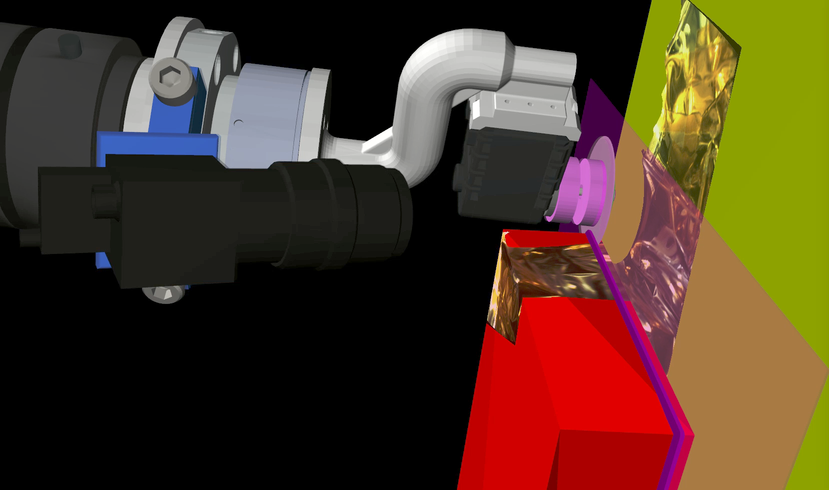

The RRM research testbed used an augmented reality visualization, where virtual objects, such as graphical representations of the virtual fixtures or feedback from the remote task monitor, are overlaid on the delayed video images. In addition to the problems due to the time delay, this approach restricts the interface to one of the available camera views, which is often sub-optimal and unintuitive because it is frequently provided by a camera mounted on the robot end-effector (i.e., an “eye in hand” configuration). Thus, we developed an augmented virtuality visualization, where the operator primarily views a 3D model of the scene, which can be presented in stereo and from any perspective. This model is augmented by projections of the live (delayed) video onto the 3D model.
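One common way to project video onto a 3D model is to map each model vertex into the camera image with a pinhole camera model and use the resulting pixel as a texture coordinate. The Python sketch below shows that idea only; the source does not describe how the projection is implemented, so the function names, the pinhole model without lens distortion, and the parameters (`rotation`, `translation`, `fx`, `fy`, `cx`, `cy`) are assumptions.

```python
def project_vertex(p_world, rotation, translation, fx, fy, cx, cy):
    """Project a 3D model vertex into the camera image.
    rotation (3x3 nested lists) and translation (length 3) map world
    coordinates into the camera frame: p_cam = R * p_world + t.
    Returns pixel coordinates (u, v), or None if the vertex is not in
    front of the camera."""
    p_cam = [sum(rotation[i][j] * p_world[j] for j in range(3)) + translation[i]
             for i in range(3)]
    if p_cam[2] <= 0.0:
        return None
    u = fx * p_cam[0] / p_cam[2] + cx
    v = fy * p_cam[1] / p_cam[2] + cy
    return (u, v)

def texture_coordinate(pixel, width, height):
    """Convert a pixel to normalized texture coordinates in [0, 1).
    Returns None if the pixel is missing or falls outside the image,
    in which case the vertex receives no video texture."""
    if pixel is None:
        return None
    u, v = pixel
    if not (0.0 <= u < width and 0.0 <= v < height):
        return None
    return (u / width, v / height)
```

Vertices that project outside the image, or that lie behind the camera, simply keep the untextured model appearance, which is one reason the operator can still view the scene from perspectives the camera does not cover.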

As shown in the figure below, our experimental setup uses either the da Vinci master console or a conventional keyboard/GUI interface to teleoperate a UR5 or UR10 robot equipped with a rotary cutting tool. For our development and testing, we are using a small-scale satellite model that was built at JHU.

Publications (OSAM-1 setup)

Teleoperation and Visualization Interfaces for Remote Intervention in Space Journal Article

In: Frontiers in Robotics and AI, vol. 8, 2021.

Interactive Planning and Supervised Execution for High-Risk, High-Latency Teleoperation Proceedings Article

In: IEEE/RSJ Intl. Conf. on Intelligent Robots and Systems (IROS), pp. 1857-1864, 2020.

Visual Monitoring and Servoing of a Cutting Blade during Telerobotic Satellite Servicing Proceedings Article

In: IEEE/RSJ Intl. Conf. on Intelligent Robots and Systems (IROS), pp. 1903-1908, 2020.

Experimental Evaluation of Teleoperation Interfaces for Cutting of Satellite Insulation Proceedings Article

In: IEEE Intl. Conf. on Robotics and Automation (ICRA), pp. 4775-4781, 2019.

Scene Modeling and Augmented Virtuality Interface for Telerobotic Satellite Servicing Journal Article

In: IEEE Robotics and Automation Letters, vol. 3, no. 4, pp. 4241-4248, 2018.

Augmented Virtuality for Model-Based Teleoperation Proceedings Article

In: IEEE/RSJ Intl. Conf. on Intelligent Robots and Systems (IROS), pp. 3826-3833, Vancouver, Canada, 2017.